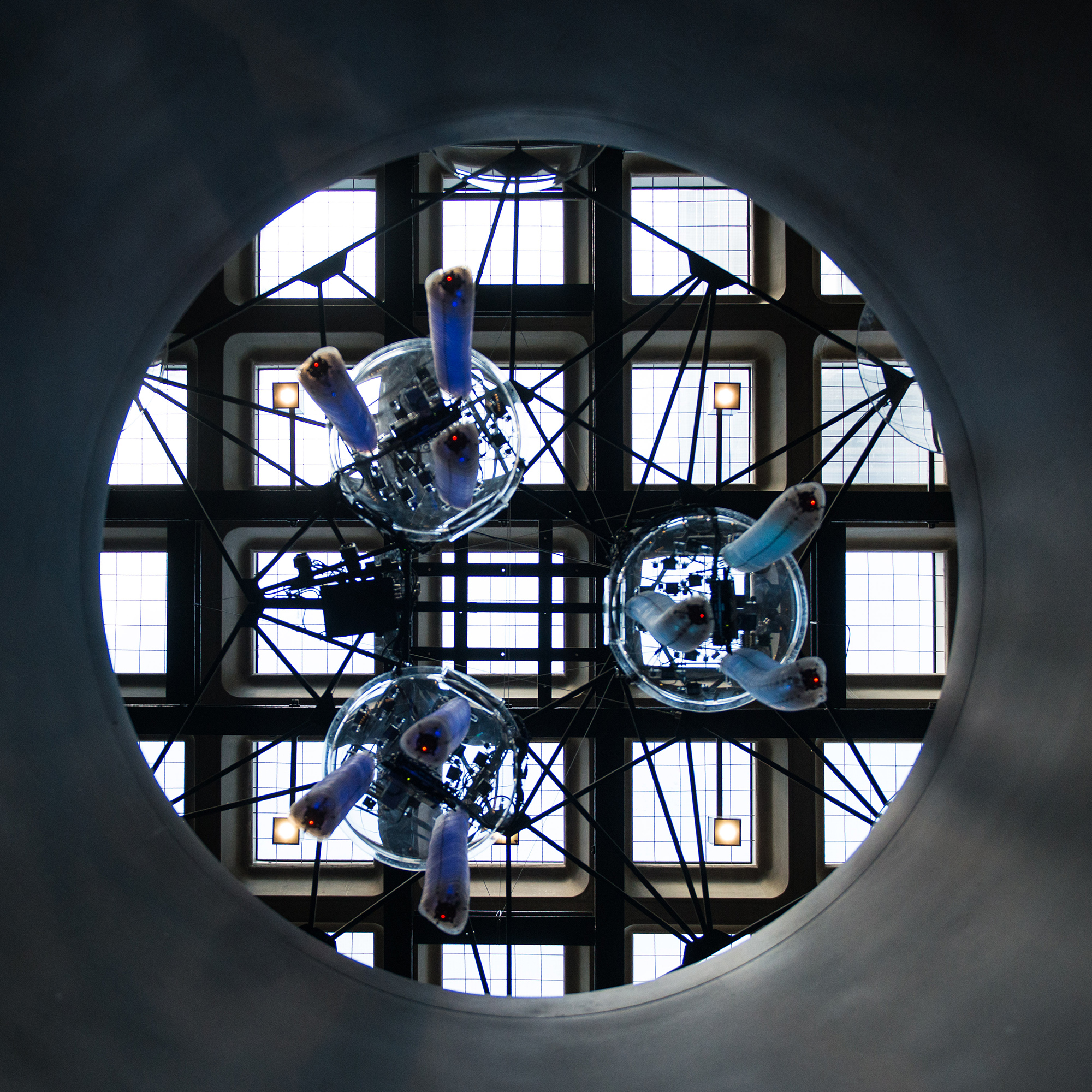

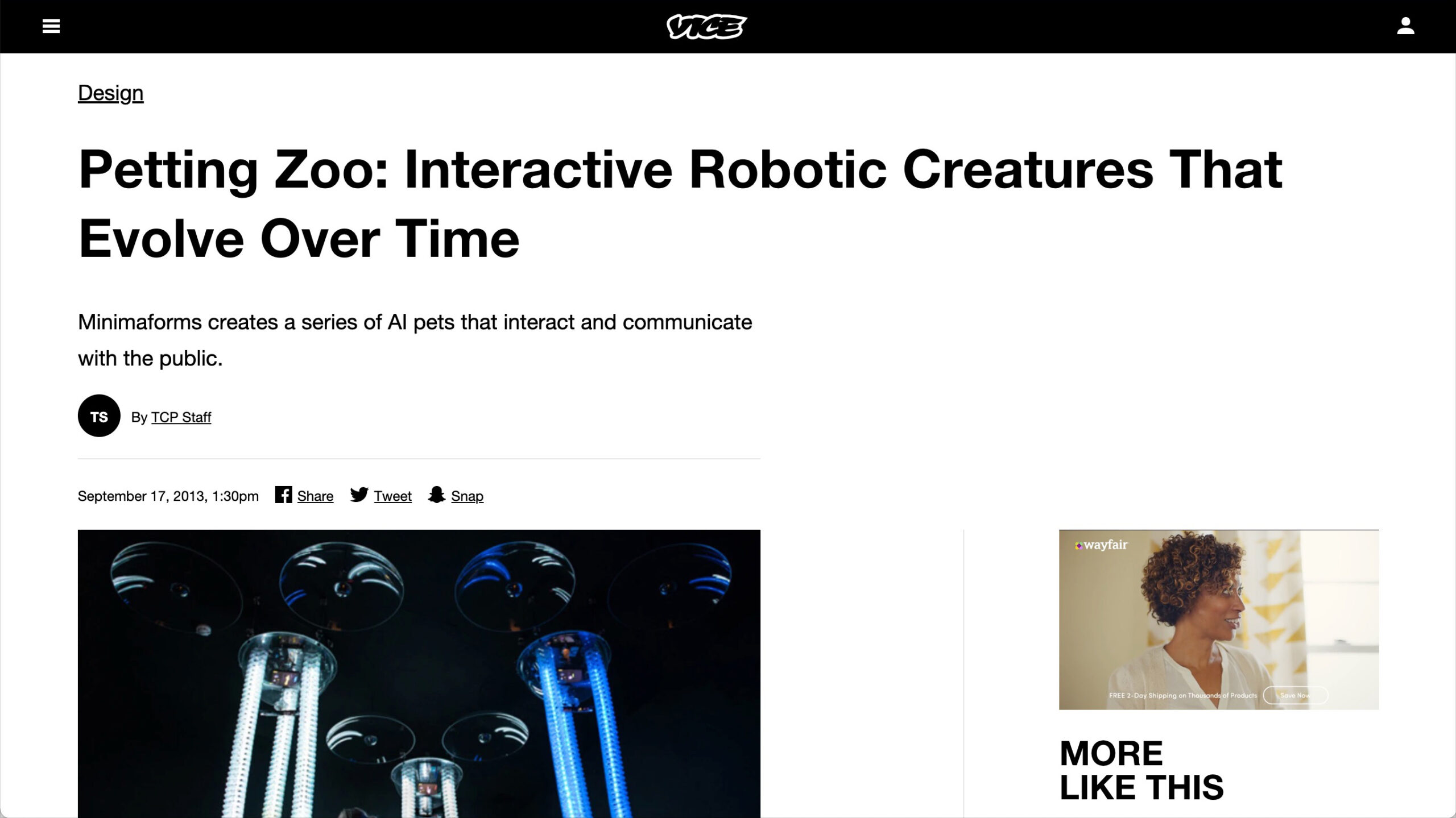

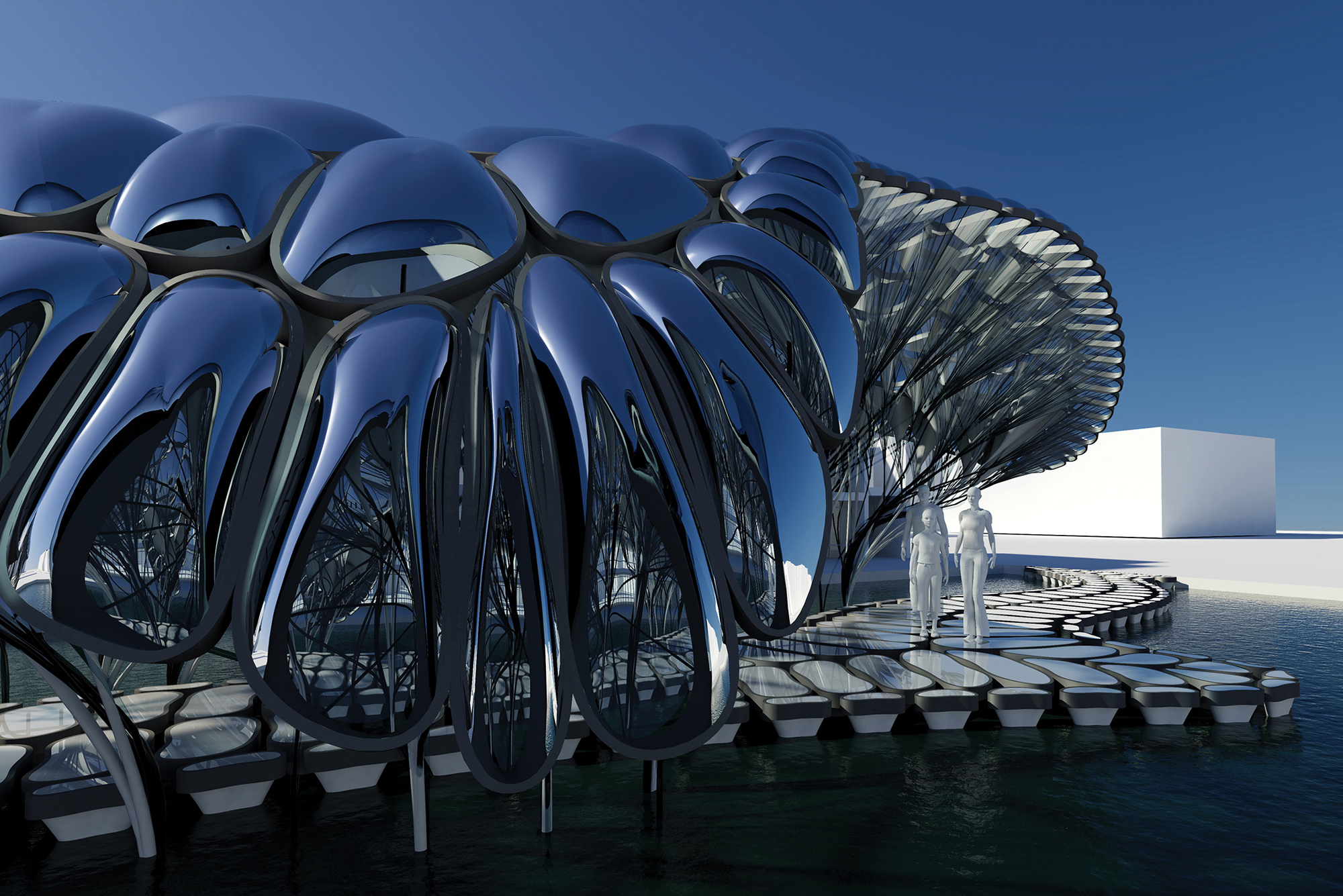

Petting Zoo, Barbican Centre, London

A generative robotic installation populated by inquisitive and artificially intelligent creatures, which respond to human engagement.

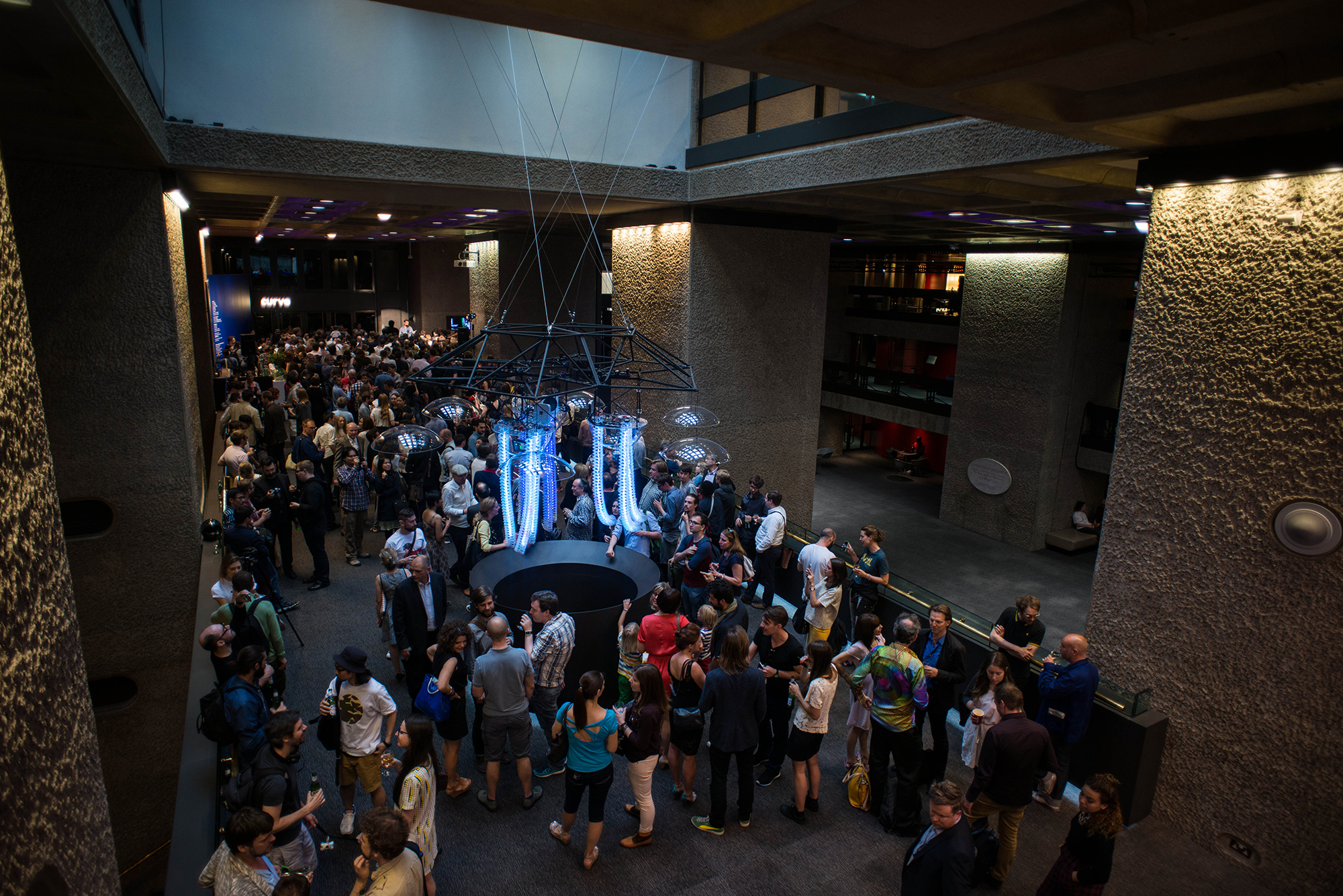

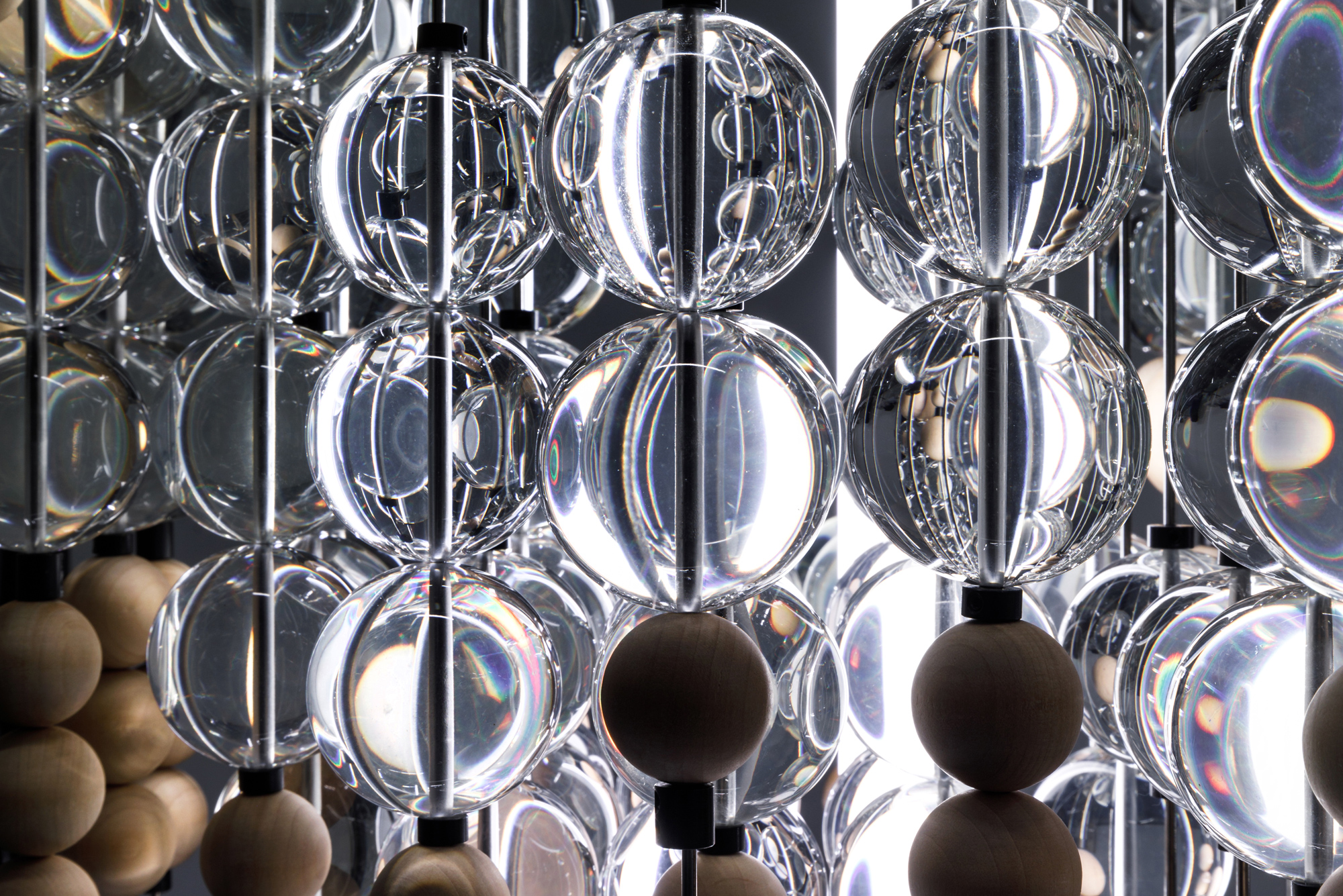

Petting Zoo is conceived as a speculative robotic environment populated by artificially intelligent creatures designed to learn and explore behaviours through interaction with participants. Within this immersive installation, interaction with the pets fosters human curiosity and play, forging intimate exchanges that are emotive, evolve over time and enable communication between people and their environment. The installation exhibits life-like attributes through forms of conversational interaction, establishing communication with users that is emotive and sensorial. Social and synthetic forms of systemic interaction allow the pets to engage and evolve their behaviours over time, exhibiting features and personalities that are formed through their interactions with the general public. The pets stimulate participation through animate behaviours conveyed visually, haptically and aurally. Their interactions are triggered either by human users or by other pets within the population. Using a real-time camera-tracking system that can locate people and detect gesture and activity, each pet has the capacity to process data so that it can learn and explore different behaviours by interacting with the public and with other pets. Over the course of the exhibition, unique personalities develop through human interaction, enabling intimate and immediate exchanges that are playful, emotive and evolving.

These experiments are an exploration in artificial intelligence that prompts us to think about how we can coevolve with and inhabit our future human-machine environments. Moving beyond robotics understood as a tool of production, Petting Zoo examines the emotive and behavioural features of our engagement with robots and with each other. Over time and in response to habit, the Pets could be argued to develop a form of “personality”. Because the orientation and environmental features of each installation create different interaction scenarios for each Pet, over time each Pet forms a unique capacity to exhibit varied behaviours. The personalities evolve through the system's self-observation, and open up territories to further explore how we can design systems that evolve with us.

Support structure engineered by AKT II.

Selected Press

The New York Times, Exhibitions, Tracking the Digital Revolution, From Pong to ‘Gravity’

Wired, Design, Six Projects from the Frontier of Digital Art

Wired, Technology, A Digital Petting Zoo is a Template for Homes that Learn to Adapt

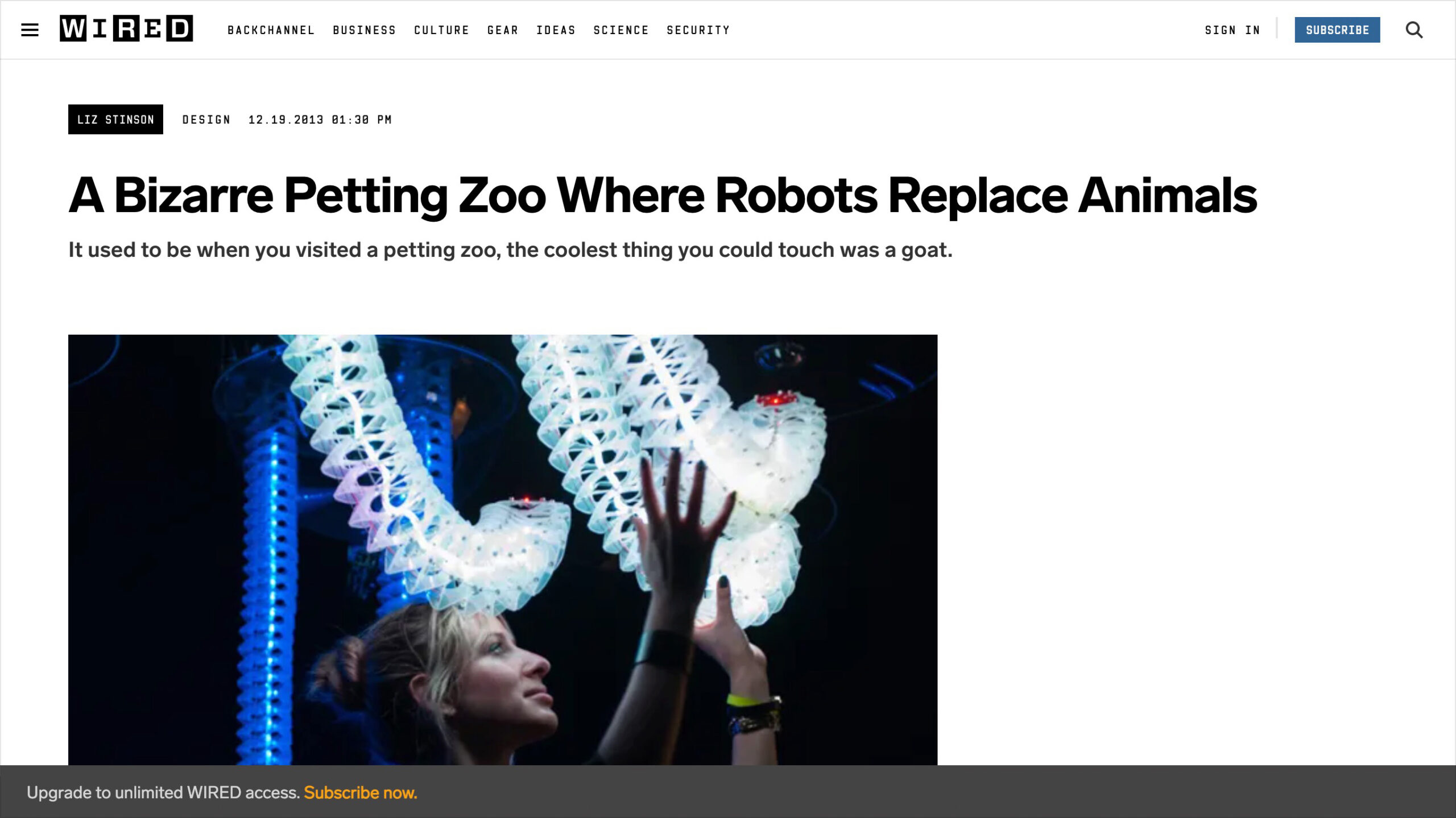

Wired, Design, A Bizarre Petting Zoo Where Robots Replace Animals

CNN, Touch it You Know you Want to, The Hands on World of Digital Art

ABC News, 'Petting Zoo' Exhibit Lets Visitors Interact with Intelligent Robot Pets

CBS News, Digital Tech get Creative

Smithsonian, Seven Ways Technology is Changing How Art is Made

Vice, Petting Zoo: Interactive Robotic Creatures That Evolve Over Time

The Verge, Robotic Petting Zoo Replaces Furry Animals with Inquisitive Plastic Tentacles

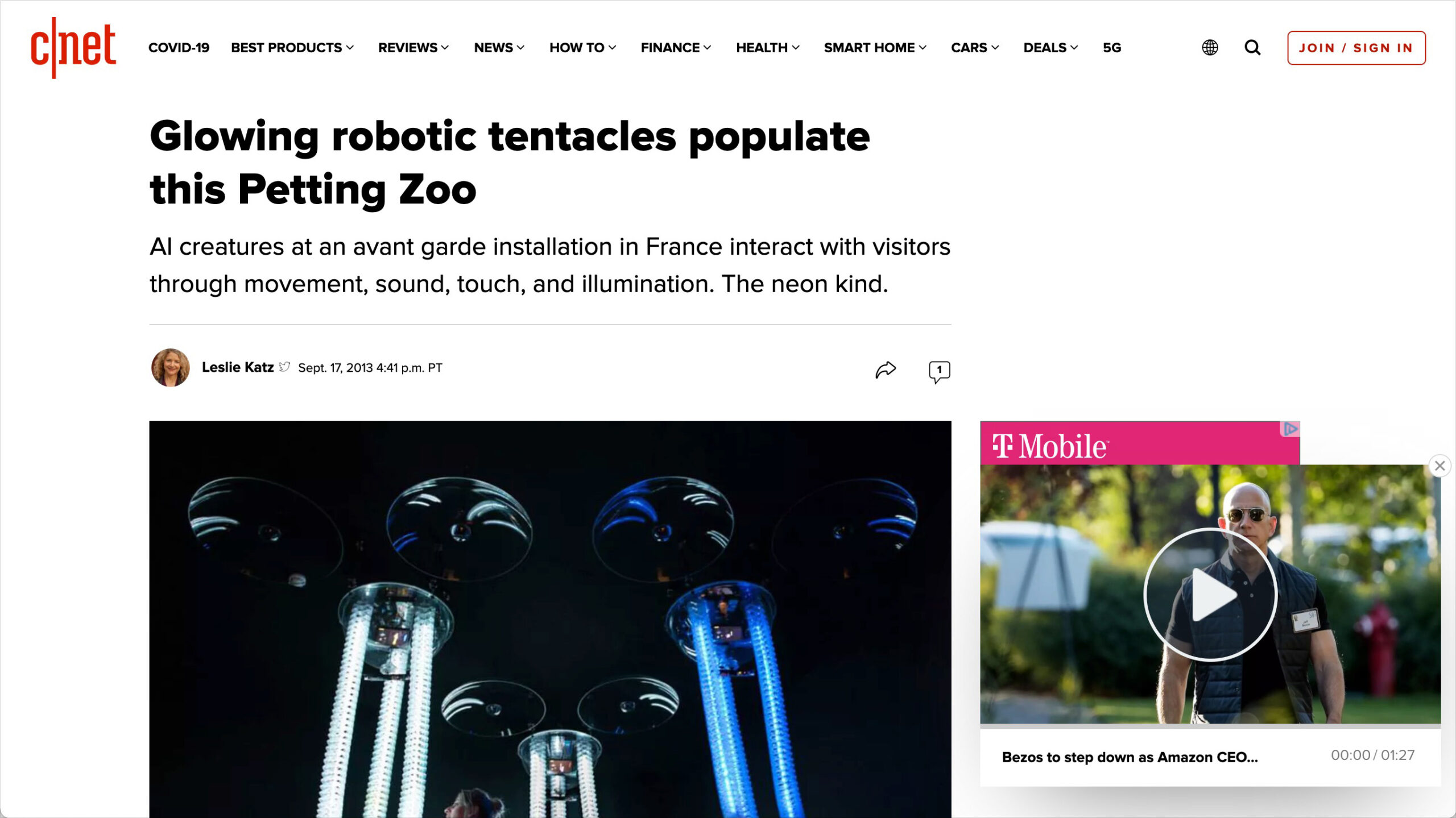

CNET, Glowing Robotic Tentacles Populate this Petting Zoo of AI Creatures

Daily Mail, The Petting Zoo Full of Robots

Mashable, Robotic Petting Zoo Replaces Animals with Plastic Tentacles

Creative Applications, Artificial creatures designed to learn and explore

The Royal Academy, RA Recommends Our Pick of this Week's Art Events June - 3 July

EXHIBITED

Deutsches Filminstitut & Filmmuseum, Frankfurt, Germany

Guangdong Science Centre, Guangzhou, China

National Museum of Science and Technology (Tekniska Museet), Stockholm, Sweden

Barbican Centre Main Foyer, London, England

Frac Centre, Archilab Naturalizing Architecture Exhibition

Leonardo Da Vinci Science and Technology Museum, Pet 2.0, Milan, Italy

Onassis Cultural Center, Athens, Greece

Zorlu Centre, Istanbul, Turkey

TEDxAcademy Athens exhibition, Athens, Greece

Selected Works

The Order of TimeProject type

Of and In the WorldRobotic Sculpture

Memory Cloud DetroitProject type

Dark MatterProject type

Emotive CityProject type

Nodeul IslandProject type

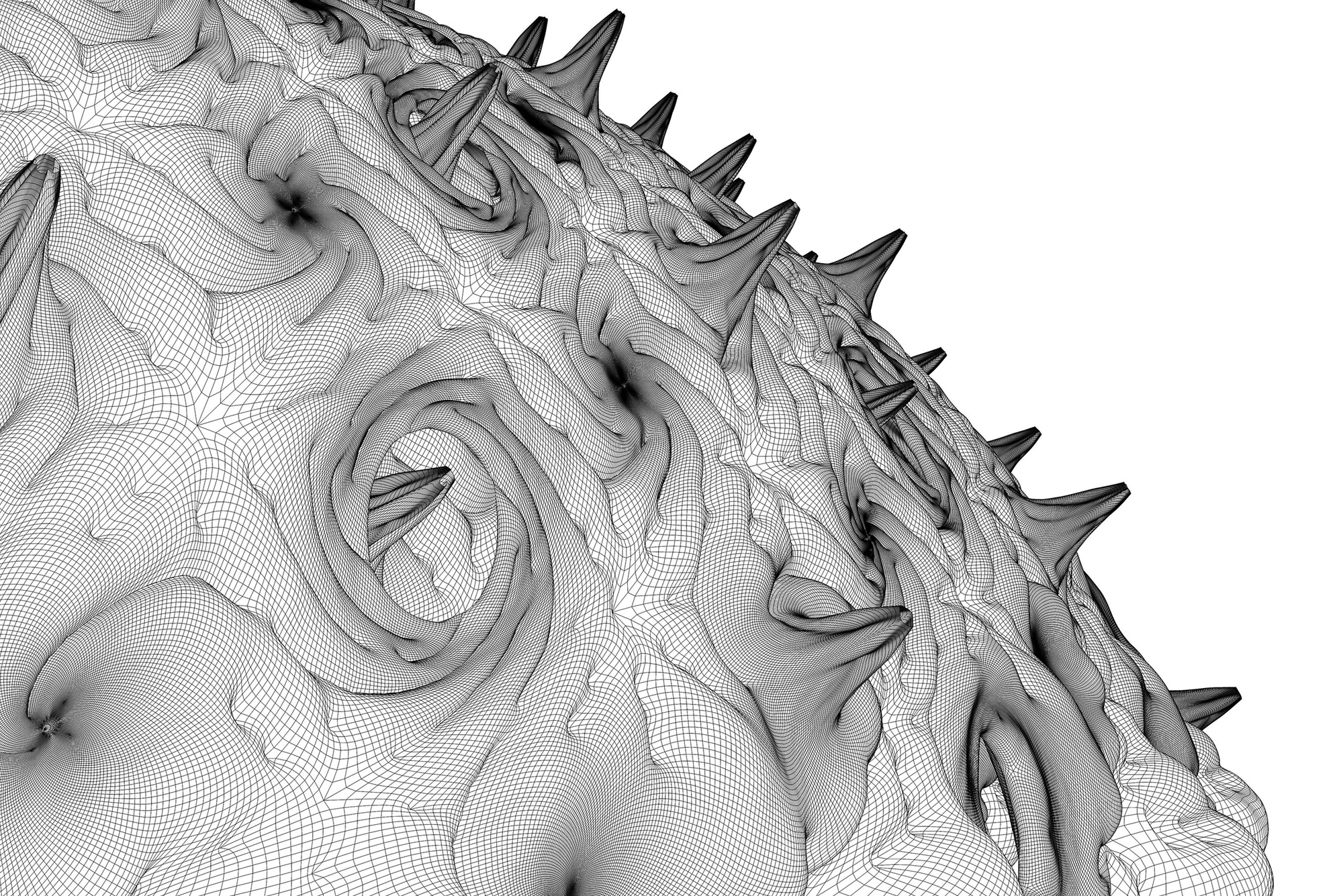

Endless Forms Most BeautifulProject type

Seeing MachineProject type

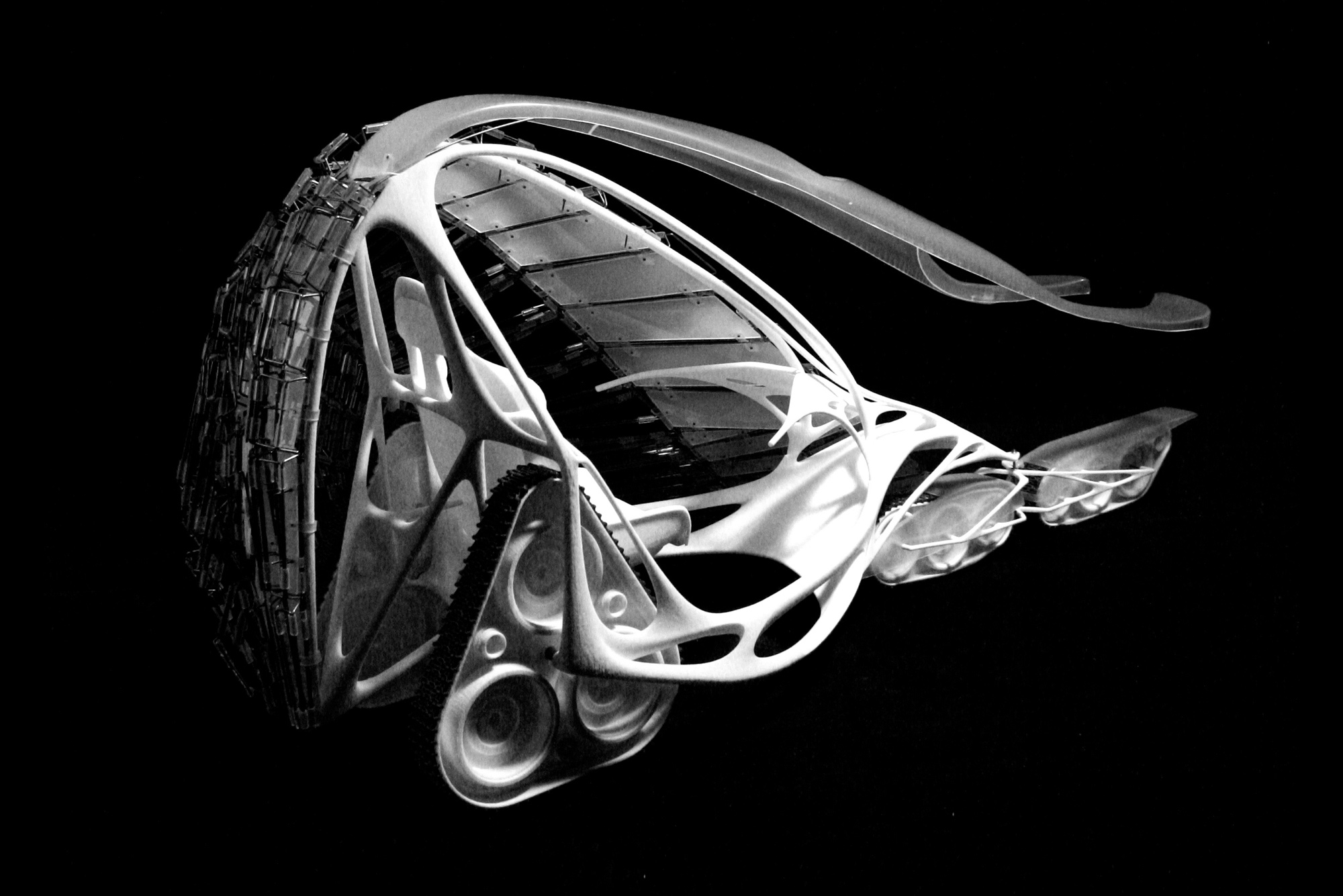

VehicleRobotic Sculpture

Soft CastProject type

FacebreederRobotic Sculpture

Becoming AnimalProject type